Following her insightful SCItalk on Diversity, Equality and Inclusion, Ijeoma Uchegbu, Chair in Pharmaceutical Nanoscience at UCL's School of Pharmacy, speaks with Darcy Phillips.

Tell us about your career path to date.

I trained as a pharmacist and then did a PhD on drug delivery using nanosystems at the School of Pharmacy. After about two years as a postdoctoral scientist, I was appointed to a lectureship at the University of Strathclyde in Glasgow, and five and a half years later, in 2002, I was appointed to a professorship. I then joined the School of Pharmacy as Professor of Pharmaceutical Nanoscience in 2006, and UCL in 2012.

My work has focused on understanding how drug transport may be controlled in vivo using nanoscience approaches. I co-founded Nanomerics Ltd. with my long-term collaborator – Professor Andreas G. Schätzlein – and next year will see Nanomerics take the technologies developed in academia into clinical testing. This is a huge milestone for our small company and for us personally. I liken this milestone to sending your only child off into the big wide world, and so we are understandably nervous and excited in equal measure!

Science was a refuge for me as I moved countries as a teenager – from London, the city of my birth, to a small town in Nigeria called Owerri. Science subjects were the only subjects that were common on the secondary school curricula of both countries. I really had no other option. I fell in love with science because it was familiar.

Which aspects of your work motivate you the most?

The joy of discovery really gives one a high and this is what I enjoy the most. Validation of one’s discoveries by other members of the scientific community cements the high and when one’s ideas are evidenced first by experimentation and then appreciated by one’s peers, there is no other feeling in the world quite like it.

What has been your proudest achievement?

Getting my professorship so soon after my appointment to a lectureship at the University of Strathclyde is up there with the greatest moments of my career, as is bringing up my daughters at the same time. Oh dear – there are far too many moments to mention, to be honest! Every day I don’t get a rejected paper or grant is really a proud day. Rejections are 90% of a scientist’s life.

You spoke recently at our SCItalk on diversity, equality and inclusion and the importance of data. Could you give us a summary of why this is so important?

To produce good quality science outputs with the maximum impact we need a variety of individuals asking and answering the most profound of research questions. We need more data on diseases and conditions that affect women and more data on the genomics affecting the global southern majority. We need answers to the pressing questions on health outcomes in the poorest in our UK society. Well, you get the picture. We need high quality data on these largely forgotten issues.

What do you think are the next key steps to making STEM more diverse and inclusive?

We first need to recognise that a problem exists. This is the first step. The data on underrepresentation needs to be at the forefront of our thinking when we are making decisions. We need funders to acknowledge the deficit in the current ways of doing things and then commit to act appropriately. The oddest thing about a skewed and unequal system is that we all lose out when there are entrenched inequalities. Even those that think that they are gaining from the current system are not.

From government grants to analysing your own carbon footprint, energy-efficient measures could reduce the environmental impact of your SME and save you money. Retail Merchant Services explained some of the changes you could make.

Which measures could Small and Medium-sized Enterprises (SMEs), especially energy-intensive businesses, take right now to reduce their carbon footprints?

- Look at the sustainability of the products you are creating. Can you use renewable materials? Or, if you get materials from another supplier, can you check their eco-credentials, and swap if they’re not doing what they can to go greener? Can you streamline the process so that you reduce waste wherever possible?

- Create a recycling policy. If you can’t reduce the amount of waste that you’re generating, you should aim to make sure that it can be disposed of sustainably. You could look at your shipping supply chain. If your business sends out physical items, make sure to check the packaging you’re using – can it be recycled? Are you using too much?

- Can you switch to a renewable energy supplier, or generate your own renewable energy? While it’s not right for everyone, it can also be worth considering whether any employees in your business could work from home on some days. This removes the carbon footprint of their journey to work.

The Smart Export Guarantee Scheme pays some SMEs for the renewable electricity they generate and export to the National Grid. Not only can generating your own electricity be useful in the current climate of fluctuating costs, but you can earn money from it too.

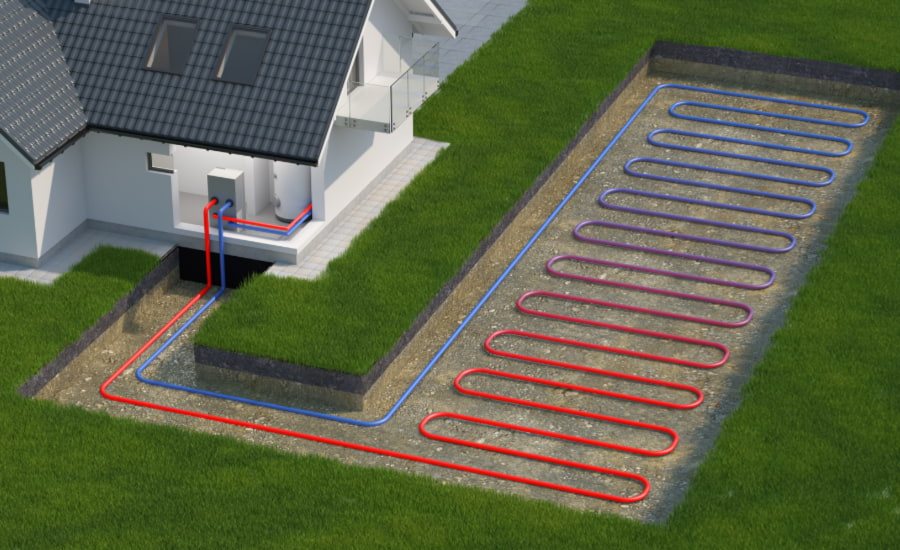

The Clean Heat Grant is a government-backed grant that rewards companies who use green heating technologies like heat pumps, and the Green Gas Support Scheme is intended to increase the amount of green gas in the National Grid.

The amount that SMEs can benefit from these schemes may depend on the amount of money that they have available to buy renewable technology, or the space to put items like heat pumps. If this is likely to be a barrier, then they may find smaller local schemes more useful.

Do you have any tips for companies calculating their carbon footprints? What are the potential benefits of this?

Take your time – understanding your carbon footprint isn’t an overnight process. You may find it beneficial to use an online carbon footprint calculator, or contact a sustainability expert to help.

You’ll need to consider three types of emissions:

- Direct emissions – the emissions that your company is directly responsible for, such as fuel for company cars.

- Energy indirect emissions – emissions from the energy you buy but don’t directly control, such as purchased electricity and heat.

- Other indirect emissions – everything else that is connected to your business activities, such as employee travel, shipping, and the whole supply chain.
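Most online calculators rest on the same basic arithmetic: each activity is multiplied by an emission factor, and the results are totalled for each of the three types above (which the Greenhouse Gas Protocol calls Scope 1, 2 and 3). The sketch below illustrates that idea; the emission factors, activity names and quantities are made-up placeholders rather than official figures, so a real calculation would substitute current published conversion factors.

```python
from dataclasses import dataclass

# Emission factors in kg CO2e per unit of activity.
# HYPOTHETICAL placeholder values for illustration only.
EMISSION_FACTORS = {
    "diesel_litre": 2.5,
    "electricity_kwh": 0.2,
    "freight_tonne_km": 0.1,
}


@dataclass
class Activity:
    description: str
    scope: int       # 1 = direct, 2 = energy indirect, 3 = other indirect
    factor_key: str  # key into EMISSION_FACTORS
    quantity: float  # amount of activity in the factor's unit


def footprint_by_scope(activities):
    """Return total kg CO2e for each scope."""
    totals = {1: 0.0, 2: 0.0, 3: 0.0}
    for a in activities:
        if a.scope not in totals:
            raise ValueError(f"unknown scope {a.scope} for {a.description!r}")
        totals[a.scope] += a.quantity * EMISSION_FACTORS[a.factor_key]
    return totals


# Hypothetical example business
activities = [
    Activity("Company car fuel", 1, "diesel_litre", 1000),
    Activity("Office electricity", 2, "electricity_kwh", 20000),
    Activity("Outbound shipping", 3, "freight_tonne_km", 5000),
]

totals = footprint_by_scope(activities)
for scope, kg in totals.items():
    print(f"Scope {scope}: {kg / 1000:.1f} t CO2e")
print(f"Total: {sum(totals.values()) / 1000:.1f} t CO2e")
```

Keeping the totals separate by scope, rather than reporting only a single figure, shows where the biggest reductions are likely to come from.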

Understanding your carbon footprint is important to help you know where you can improve and cut down on your emissions. Not only does this help the planet, but it’s also a tangible demonstration that you care about the environment, which can be attractive to sustainably minded customers.

The initial outlay for heat pumps and other technologies is steep, but this investment may pay off in the longer term.

What are the benefits of aggressively pursuing net zero and what are the drawbacks?

Of course, the primary benefit of pursuing net zero is that it helps the planet. Business waste has a huge impact on the environment and, as a result, any changes that can be made in this sector will have a big impact too. However, going net zero can also potentially make your business more profitable.

Your profits may go up for several reasons. First, a net zero business is more appealing to customers. As part of going net zero, you’re likely to adjust your products to be more eco-friendly, and with reports showing that 63% of millennials are willing to pay more for sustainable products, this could win you customers.

Second, it could save you money. You may find that examining your processes and policies to make them greener will allow you to benefit from specific tax cuts, or simply improve the efficiency of your company. In time, this could save you from wasting money as well as energy.

Third, it could boost your competitiveness. Small companies often find they just can’t match big businesses for price, so it’s important to find a selling point that allows you to remain competitive. As mentioned before, customers are increasingly looking for more ethical products, so being able to say that you’re net zero could help you beat the competition.

Finally, it could prepare you for new policies. Governments around the world are under pressure to go greener, and so they’ll likely transfer this pressure to businesses. Going green now means you’ll be ahead of the curve and able to make these changes at your own pace, rather than having to rush and pay to make them all at once.

While these are all amazing benefits, one of the biggest challenges that SMEs face is the cost of going net zero. It’s not cheap in the current economic climate, especially if you’ve got big changes to make. According to research, 40% of SMEs said that high cost and lack of budget were the biggest net zero blockers.

Electric vehicles require less maintenance – and you don’t have to pay road tax.

What are the benefits of moving your vehicles to electric right now, and what are the drawbacks?

There’s no denying that electric vehicles are significantly better for the environment than conventional cars. For companies looking for a relatively straightforward way to go greener, electric cars can be a great choice.

As well as swerving rising fuel prices, EV owners don’t currently pay vehicle tax in the UK. Additionally, they have fewer moving parts, and so require less maintenance. All of these factors mean that while EVs can be an expensive initial investment, they generally cost less to run in the long term.

With the UK government banning new petrol and diesel vehicles from 2030, investing in electric vehicles now means that SMEs can get ahead of the rush that is likely to come as we get close to the deadline. There is already a year’s wait time for some vehicles, so ordering your fleet now could mean that you avoid an even longer queue further down the line.

Of course, many SMEs feel unable to commit to electric vehicles right now due to the cost of living – they’re an expensive purchase. If this is the case, you could consider changing one vehicle at a time, and looking to see if you’re eligible for any local grants that can support you with the cost of this.

How much have inflated energy costs undermined the push for net zero?

Unfortunately, rising energy costs have meant that small businesses are feeling the pinch, and might struggle to make new eco-friendly changes, as they are often costly. For many, their focus is simply remaining profitable.

However, it is also clear that for businesses that can afford it, looking for changes that move them towards net zero can save money in the long run.

If you’re able to produce your own renewable energy (for example, by installing solar panels on your offices), you may be able to mitigate some of the effects of rising energy costs, as you won’t be as reliant on the National Grid.

Finally, apart from energy efficiency schemes, how could the government help reduce the carbon footprint of SMEs?

As well as energy schemes, the government can help by providing information and resources on sustainable practices. By sharing best practices widely with businesses, and offering them a place to go to get support, the government can help them develop more environmentally friendly operations.

Additionally, they can help by creating incentives for businesses to go green. By offering tax breaks or other financial incentives, the government can encourage businesses to adopt sustainable practices.

Written by Retail Merchant Services. The SME Environmental Impact Guide can be read in full.

Edited by Eoin Redahan.

As we speak, apples and pears are ripening on the trees. But how do you grow apple and pear trees from scratch and keep them alive? Our resident gardening expert, Professor Geoff Dixon, investigates.

Autumn is the ‘season of mists and mellow fruitfulness’, as John Keats wrote in his ode To Autumn. It is a time for harvesting temperate tree fruits, especially apples and pears in gardens and orchards.

These fruits are distinctive and delicious. The ‘Sunset’ apple cultivar, derived from ‘Cox’s Orange Pippin’, produces red-and-gold striped fruit with sweet-tasting flesh, while the French pear cultivar ‘Doyenné du Comice’ has the most superb taste if caught at peak ripeness.

The ‘Sunset’ apple.

Both apples and pears ripen after harvesting, emitting ethylene and passing through a climacteric, or critical biological stage. When respiration reaches a peak, the fruits’ flavour is most satisfying.

Both apples and pears are best planted in late autumn or during winter when the trees are dormant, either as container-grown or, preferably, bare-root trees. Place each tree in a hole large enough for the entire root system, ensuring that the graft union sits well above soil level.

Each tree consists of two parts: the rootstock, originally selected from wild species, and the scion, which is the fruiting cultivar. Apple scions are mostly grafted onto the dwarfing rootstock Malling No. 9 (M9), and pear scions onto quince rootstocks. A stake should be driven into the hole before putting the tree in place.

Pour ample water into the hole, keeping the roots wet. Do so again once soil is replaced and firmed round the tree. As the tree establishes and produces leaves and flowers, water well and regularly, especially during dry periods.

The French pear cultivar ‘Doyenné du Comice’.

Feed with fertilisers that contain large amounts of potash and phosphate but minimal nitrogen. This encourages vigorous root growth. Sprinkling compost or farmyard manure around the tree helps retain soil moisture.

Climate change is having significant deleterious impacts on all members of the Rosaceae family, including apples and pears. Australian studies indicate that temperatures are exceeding the evolutionary maximum for these species.

This stresses the plants. It adversely affects their health and performance and reduces their ability to store carbon and produce fruit crops.

Pear scab (Venturia pirina).

Levels of pest and disease infections are increasing. In particular, sap-sucking woolly aphids (Eriosoma lanigerum) have gone from minor to major apple pests in the last decade. Pear scab (Venturia pirina) has become a major cause of defoliation.

Chemical control options for both are limited, but regular drenching sprays with seaweed extracts may reduce their impact. Seaweed extracts also provide some foliar-absorbed nutrients and improve the visual quality of fruit.

Professor Geoff Dixon is the author of Garden Practices and Their Science, published by Routledge in 2019.

There is still work to be done to redress racial inequality in chemistry, and across science in general, but relatable role models can have a positive influence on the next generation.

Homophily. Ever heard of it? Me neither, until 30 minutes ago. Homophily basically means that we are more likely to connect with people who are similar to us in some way.

In work terms, homophily might draw us to a relatable role model. So, as an Irish science writer, I admire Flann O’Brien for his ability to decongest complicated subjects with such wit and flair (not so much for hiding whiskey in the toilet during interviews). For a young chemist, a role model could be someone from a similar background who excels in a job she or he would love to have.

But what happens if you just don’t see relatable role models in your chosen field? What if systemic failings make the profession less attractive and the path to success harder to trace?

Unfortunately, systemic failings, the relative lack of homophily, and pervasive inequality were among the findings of Missing Elements – Racial and ethnic inequalities in the chemical sciences, a report released by the Royal Society of Chemistry (RSC) in March.

The report highlighted the barriers facing Black chemists in the UK, and it certainly didn’t hold back. In the Foreword, Dr Helen Pain, the RSC’s Chief Executive, said: ‘The data and evidence collected in this report are clear: we are failing to retain and nurture talented Black chemists at every stage of their career path after undergraduate studies.’

The report found that just 1.4% of postgraduate students, 1% of non-academic chemistry staff, and 0% of chemistry professors are Black. It added that Black chemists face barriers in industry too, and that people from minoritised communities are under-represented at senior levels across the workforce.

It went on to identify six themes that affect the retention and progression of Black chemists, including the impact of homophily, which it defined as ‘the tendency for people to form connections with people similar to themselves.’

The importance of mentors

When I read that, a little bell chimed in my head. When my colleague Muriel Cozier interviewed three eminent Black chemists last year – Cláudio Lourenço, Jeraime Griffith, and Dr George Okafo – each mentioned the need for relatable role models to increase the representation of Black chemists.

When she asked Cláudio about specific impediments that prevent young Black people from pursuing chemistry, he said: ‘I think one of the biggest barriers that prevent people from pursuing careers in science is the lack of role models. If we only show advertisements for chemistry degrees with White people, it’s not encouraging for Black students to pursue a career there.

‘The same goes for when we visit universities; role models are needed. No one wants to be the only Black person in the department. Universities need to embrace diversity at all levels.’

George made a similar point. He emphasised the need for young chemists to surround themselves with mentors. ‘I think it is important to look for role models from the same background to help inspire you.’ When Muriel asked him which steps could be taken to increase the number of Black people pursuing chemistry as a career, he added: ‘Have more role models from different backgrounds. This sends a very powerful message to young people studying science reinforcing the message… I can do that!’

When asked about his message for Black people following in his footsteps, Jeraime said: ‘Seek out mentors, regardless of race, who can help you get there. Don’t be afraid to email them and briefly talk about your interest in the work they’ve done, what you have done, and are doing now.’

Jeraime also cited lack of representation as a barrier that prevents more young Black people from entering chemistry. ‘Lack of representation I think is the number one barrier,’ he said. ‘Impostor syndrome is bad at the best of times, but worse still if there’s no representation in the ivory tower.’

The issue of inequality in chemistry is large – far too large for a mere 752-word blog – but as we celebrate the achievements of Black chemists everywhere this week, it is clear just how much of a positive influence role models such as Cláudio, George, Jeraime, and countless others can have on the dreams and aspirations of young chemists.

>> Here are Cláudio’s, Jeraime’s, and George’s stories.

Written by Eoin Redahan and based on previous reporting by Muriel Cozier.

In his winning essay in SCI Scotland’s Postgraduate Researcher competition, Angus McLuskie, Postgraduate Researcher at the University of St Andrews, explains his work in replacing non-renewable and toxic feedstocks with novel sustainable catalytic processes to produce useful chemicals.

Each year, SCI’s Scotland Regional Group runs the Scotland Postgraduate Researcher Competition to celebrate the work of research students at Scottish universities.

This year, four students produced outstanding essays in which they describe their research projects and the need for them. In the first of this year’s winning essays, Angus McLuskie outlines his work in improving the production of urea derivatives and polyureas.

Would you risk your life for plastics and agrochemicals? You might not have to…

Urea derivatives have a substantial global market, which is dominated by their use as fertilisers in the agrochemical sector, in addition to smaller-scale technical applications as glues, resin precursors, dyes and pharmaceutical drugs. Furthermore, polyureas are important protective coatings, with a global market exceeding £800 million a year.

Currently, urea derivatives and polyureas are produced on an industrial scale using highly toxic chemicals such as phosgene, (di)isocyanates and carbon monoxide. These reagents are detrimental to human health, as evidenced by the release of methyl isocyanate gas from the Bhopal Union Carbide factory in 1984, which led to thousands of deaths and a global outcry.

Phosgene was itself used as a battlefield chemical weapon in World War I, and is sourced from fossil-fuel-derived carbon monoxide. The result is a process with significant health and environmental impacts.

As part of a global drive to tackle climate change and move towards a circular economy, the objective of our research is to replace non-renewable and toxic feedstocks with novel sustainable catalytic processes to produce useful chemicals and materials.

>> More information about the Scottish Postgraduate Researcher competition.

In pursuit of greener methods, we have recently discovered synthetic methodologies that use a manganese catalyst for the dehydrogenative coupling of (1) methanol with (di)amines and (2) formamides with amines, giving symmetrical (poly)ureas and unsymmetrical urea derivatives, respectively (ACS Catal., DOI:10.1021/acscatal.2c00850).

Angus with his poster on Mn-Catalysed Dehydrogenative Synthesis of Urea Derivatives and Polyureas.

The only byproduct of the process, molecular hydrogen, is valuable in itself, and the far less hazardous reagents, methanol and formamides, can be sourced from renewable feedstocks. For example, Carbon Recycling International, an Iceland-based company, has developed methods to generate methanol industrially through the direct hydrogenation of CO2 (ATZextra Worldw., DOI:10.1007/S40111-015-0517-0). Formamides can be made from formic acid, which may be produced from biomass or CO2.
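Written out as overall balanced equations, the couplings described above release only hydrogen. This is a sketch derived from the stoichiometry rather than reproduced from the paper: R and R' stand for generic organic groups, the manganese catalyst and reaction conditions are omitted, and polymer end groups are ignored.

```latex
\begin{align*}
2\,\mathrm{RNH_2} + \mathrm{CH_3OH} &\longrightarrow \mathrm{RNHC(O)NHR} + 3\,\mathrm{H_2} \\
\mathrm{RNHCHO} + \mathrm{R'NH_2} &\longrightarrow \mathrm{RNHC(O)NHR'} + \mathrm{H_2} \\
n\,\mathrm{H_2N{-}R{-}NH_2} + n\,\mathrm{CH_3OH} &\longrightarrow \mathrm{[{-}NH{-}R{-}NH{-}C(O){-}]}_{n} + 3n\,\mathrm{H_2}
\end{align*}
```

In each case the carbonyl carbon of the urea comes from the methanol or formamide, and every hydrogen removed leaves as H2.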

Synthesis approach

Urea derivatives have previously been synthesised by this approach using iron and ruthenium catalysts, but each has its limitations. Iron catalysts give poor yields and a narrow substrate scope, while ruthenium catalysts are expensive and raise sustainability concerns due to ruthenium’s low abundance in Earth’s crust (Chem. Sci., doi.org/10.1039/C8SC00775F and Org. Lett., doi.org/10.1021/acs.orglett.5b03328).

Polyureas had previously been made by this approach only with a ruthenium catalyst. With a manganese-based pincer catalyst, we succeeded in making a broad variety of symmetrical and unsymmetrical urea derivatives as well as polyureas in high yields and at a low catalyst loading of 0.5-1 mol%. As the third most abundant transition metal in Earth’s crust, manganese is much cheaper than ruthenium, which improves the economic viability of the process for industrial applications.

Breaking new ground?

This is the first example of the synthesis of polyureas from diamines and methanol using a catalyst of an Earth-abundant metal. We have demonstrated for the first time the synthesis of a potentially 100% renewable polyurea from methanol and the renewable diamine Priamine, which is commercialised by Croda. This could be of interest to emerging businesses making bio-based or renewable plastics.

Angus hopes his research will help us develop urea-functionalised agrochemicals and pharmaceutical drugs in a more efficient, greener way.

This initial proof of concept is exciting, but there are challenges to overcome before commercialisation. Cost is clearly important, and since the catalyst is much more expensive than reactants such as amines and methanol, the cost is directly linked to the catalyst’s activity: a non-recyclable homogeneous catalyst with a turnover number of 100-200 makes the process expensive.
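The link between catalyst activity and cost can be made explicit with the turnover number (TON), the moles of product formed per mole of catalyst. As a rough sketch, assuming full conversion and a loading expressed relative to the moles of product formed, the 0.5-1 mol% loadings quoted above cap the TON at 100-200, so every mole of product carries between 1/200 and 1/100 of a mole of a catalyst that cannot be recovered:

```latex
\begin{align*}
\mathrm{TON} &= \frac{n_{\text{product}}}{n_{\text{catalyst}}} \\
\mathrm{TON}_{\max} &= \frac{1}{\text{loading}}: \quad \frac{1}{0.01} = 100, \quad \frac{1}{0.005} = 200 \\
\text{catalyst cost per mole of product} &= \frac{\text{cost per mole of catalyst}}{\mathrm{TON}}
\end{align*}
```

Raising the TON is therefore the most direct lever on the catalyst’s share of the cost.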

We are now focusing our efforts on enhancing the efficiency of the catalyst to increase cost-effectiveness, which will also allow us to make commercially important urea-functionalised pharmaceutical drugs and agrochemicals with greater efficiency and reduced impact on the environment, human health, and economy.

Are you thinking of filing a chemical industry patent in 2022? Anthony Ball, Senior Associate at patent attorney Abel + Imray, gave us the lowdown on the process, the costs, and filing your patents in different countries.

I’ve developed a novel technology. How do I patent it, how long does it take, and how much could it cost?

The first step in patenting a novel technology is to file a patent application. The patent application must contain a description of the technology that you have developed in enough detail for others to work the invention. It also needs to contain some claims that define the protection you think you are entitled to. Before the application is filed, it is also important to sort out who the inventors are and who owns the invention.

The application is then examined, during which the Patent Office and you come to an agreement regarding the extent of protection that you are entitled to. Once the extent of protection is agreed, the patent will proceed to grant.

The application will be published around 18 months from filing, which allows competitors to see what you intend to protect. Grant, which is what makes the patent enforceable, usually takes longer: typically from four to 10 years. For a UK patent protecting a chemical invention, the total cost might be around £10,000.

A separate patent is required for each country in which you are likely to want to stop competitors using your technology. Obtaining patents in the most important markets might cost in excess of £50,000 for a chemical invention. Although this might sound like a lot of money, not all of this needs to be paid at the start of the process. Instead, it is spread out over a few years, with the biggest investment usually coming three years into the process.

You mentioned that you can obtain a patent for a compound, a formulation, or a process for synthesising compounds. Does the patent process and cost vary according to the type of product or the branch of chemistry?

The overall process – filing a patent application, the patent application being examined and then the patent being granted – is the same for all technologies. However, there are some issues faced in certain branches of chemistry (such as pharmaceuticals) which can be quite difficult to overcome, and are not faced as commonly in other branches of chemistry. Because of this, it can sometimes take longer for patents in these fields to be granted than in other fields of chemistry, and the costs can be higher.

In which scientific areas has there been a recent rise in patent applications and are any fields relatively under-represented by comparison?

Looking at European patent applications, the chemical industry has been fairly strong recently. Pharmaceuticals and biotechnology in particular saw relatively large increases in the number of European patent applications filed in 2020, although the number of applications in the organic fine chemistry field decreased slightly.

I want to file my patent in several countries. What do I do, and how much do the costs vary, depending on the country? For example, how would the cost of a patent in the UK compare to one in the US?

If you wish to have a patent in several countries, the start of the process is the same as the one described earlier; a patent application is filed in one country. Then, the most cost effective way to extend the protection to other countries is usually to file a “PCT application” within a year of filing the original application. After a further 18 months, you can turn this PCT application into applications for most countries around the world, including Europe, the US, China and India.
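The timing above can be sketched as simple date arithmetic counted from the first filing. This is a rough illustration only: the helper names are hypothetical, the 12-, 18- and 30-month offsets are the approximate periods described here, and real deadlines follow each office’s own rules (some regional offices, such as the European Patent Office, allow 31 months rather than 30).

```python
import calendar
from datetime import date


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping the day to the end of the target month."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def pct_timeline(first_filing: date) -> dict:
    """Approximate milestones counted from the first (original) filing date."""
    return {
        "file PCT application (~12 months)": add_months(first_filing, 12),
        "application published (~18 months)": add_months(first_filing, 18),
        "enter national/regional phases (~12 + 18 months)": add_months(first_filing, 12 + 18),
    }


for milestone, when in pct_timeline(date(2022, 3, 15)).items():
    print(f"{milestone}: {when.isoformat()}")
```

Clamping the day means that an application first filed on 31 August maps to the last day of a shorter target month, rather than failing on a date such as 31 February.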

Costs do vary between different countries. To use the example above, it might cost 50-100% more to obtain a patent in the US than in the UK alone. It is worth noting that a patent for the same technology from the European Patent Office might cost around the same as a patent in the US, but the patent from the European Patent Office can then be converted into a patent in each country in the EU, plus some others (including the UK, Norway and Switzerland). Unfortunately, it is difficult to be precise about costs, because they depend very much on the number and type of objections raised by the patent office examiners.

One other consideration is translations. For long applications (which can be quite common in some branches of chemistry), these can be expensive, adding thousands of pounds to the cost of obtaining a patent. One country where a translation might be required, and which is of growing importance in the chemical area, is China.

Patents from the European Patent Office are valid across the EU and in several other countries. | Editorial credit: nitpicker / Shutterstock.com

>> From patents to green chemistry and agrifood, we have some great events coming up. Find out more on our event page.

Is there anything chemists and chemistry industry professionals should be particularly mindful of when submitting patent applications in 2022?

Patent law is underpinned by a number of international agreements, which are hard to renegotiate. As a result, the law is actually very stable over time, and so the considerations in 2022 will broadly be the same as they have been in the past. Having said that, one important thing to bear in mind at the moment is the amount of data to include in the patent application.

There is a balance between filing as soon as possible (to prevent a competitor getting there first, and to minimise the chance of a disclosure of something that would make your technology unpatentable), and making sure that the application has enough data to show that the extent of protection that you are asking for is justified. In some cases, it is possible to present data to justify the scope of protection after the application has been filed, but recently many patent offices have made that more and more difficult.

As such, filing too early, and with only a small amount of data to support your claims, could result in a very narrow patent, which might potentially be easy to work around. It is very important to include enough evidence to show that at least the parts of your invention which have the most commercial interest (e.g. the most active compounds) show the technical effect which is mentioned in the patent application.

How much have the law and process around patents changed in recent years?

The law around patents and patent applications is always evolving, albeit slowly. The basics – that the technology must be new, not be obvious in view of publicly available knowledge, and have an industrial application – have remained the same for many years. Likewise, the basic process to obtain a patent, as described above, has not changed recently, but the minor details of that process are constantly being updated, for example to incorporate new technology (such as online filing of the application and supporting documents, and online publication of the application) and to improve cooperation between the patent systems of different countries.

An example of improved cooperation between countries is the Unified Patent Court (UPC), which is likely to begin hearing cases in 2022. Currently, patents have to be enforced in each EU country separately using the national court systems. The UPC will establish a common court system and allow a patent to be enforced in one court case, with the result being valid in all participating EU member states.

I have made a further development to my technology after filing my patent application. How can I protect my new development?

Once it has been filed, nothing can be added to a patent application. Because of this, if you want to protect a new development to the technology that is the subject of a patent application, then another patent application must be filed directed to the new development. The two applications will be treated separately, and so in order for a patent to be granted which protects the new development, the new development must satisfy all the criteria for patentability described above.

To read more from Abel + Imray on patents, visit: https://www.abelimray.com/

On 27th January, the United Nations Development Programme (UNDP) and the University of Oxford published the world’s biggest ever survey of public opinion on climate change, covering 50 countries with over half of the world’s population. Of the respondents, 64% said climate change is a global emergency, despite the ongoing Covid-19 pandemic, and called for broader action to combat it. Earlier in the month, US President Joe Biden reaffirmed the country's commitment to the Paris Agreement on Climate Change.

This momentum, combined with the difficulties many countries currently face, may lead some to look again at geoengineering as an approach. Could large-scale engineering techniques mitigate the damage caused by carbon emissions? And is it safe to use them, or could we be exacerbating the problem?

The term has long been controversial, as have many of the suggested techniques. But some approaches appear to be attracting more mainstream interest, particularly Carbon Dioxide Removal (CDR) and Solar Radiation Modification (SRM), which the 2018 Intergovernmental Panel on Climate Change (IPCC) report for the UN suggested were worth further investigation. Significantly, the report did not use the term "geoengineering" and distinguished these two methods from others.

One of the most widely discussed CDR techniques is Carbon Capture and Storage (CCS), or Carbon Capture, Utilisation and Storage (CCUS): the process of capturing waste carbon dioxide, usually from carbon-intensive industries, and storing it (or first re-using it) so that it does not enter the atmosphere. Since 2017, after a period of declining investment, more than 30 new integrated CCUS facilities have been announced. However, many are concerned that it will encourage further carbon emissions, when the goal should be to reduce emissions and use CCS only to buy time to do so.

CDR techniques that harness existing natural processes of repair, such as reforestation, agricultural practices that lock carbon into soils, and ocean fertilisation, are areas that many feel could and should be pursued on a large scale. They would bring ecological and biodiversity benefits, as well as fostering a different, more beneficial relationship with local environments.

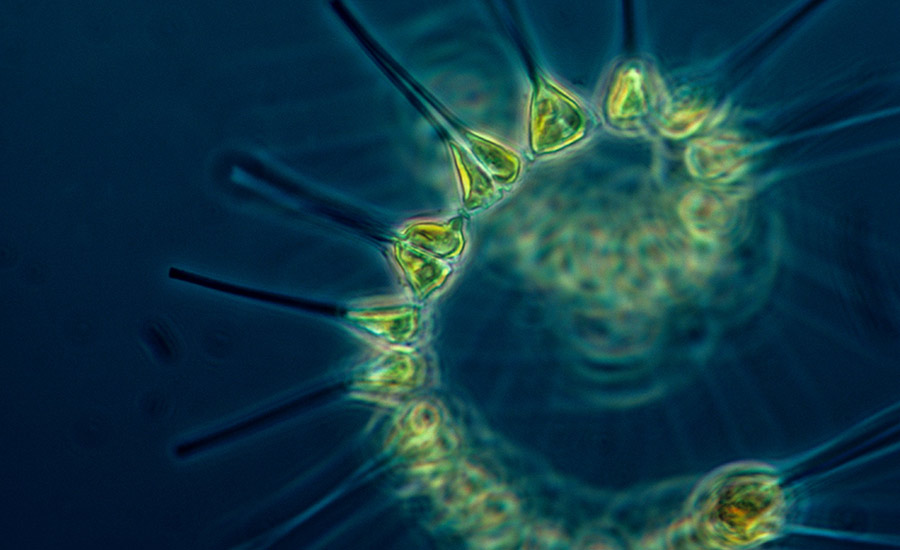

A controversial iron compound deposition approach has been trialled to boost salmon numbers and biodiversity in the Pacific Ocean.

The ocean is a largely untapped resource with huge potential, and iron fertilisation is one very promising approach. The controversial Haida Salmon Corporation trial in 2012 is perhaps the best-known example and brings together many of the pros and cons frequently discussed in geoengineering; in many ways, it is a microcosm of the bigger issue.

Over a period of 30 days, the trial deposited 120 tonnes of iron compound in the migration routes of pink and sockeye salmon in the Pacific Ocean, 300 km west of Haida Gwaii. This resulted in a phytoplankton bloom covering 35,000 km² and lasting several months, which was confirmed by NASA satellite imagery. The bloom fed the local salmon population and revitalised it: the following year, the number of salmon caught in the northeast Pacific rose from 50 million to 226 million. The local economy benefited, as did the biodiversity of the area, and the phytoplankton growth stimulated by the added iron captured carbon (as did the biomass of fish, for their lifetimes).

Small but mighty, phytoplankton are the laborers of the ocean. They serve as the base of the food web.

But Environment Canada believes the corporation violated national environmental laws by depositing iron without a permit. Much of the fear around geoengineering concerns what rogue states, or even rogue individuals, might do: take large-scale action with global consequences without global consent.

The conversation around SRM raises many of the same questions. Who decides that the pros are worth the cons, when the people most likely to suffer the negative effects, with or without action, are already the most vulnerable? This concern is shared by some of the leading experts in the field. Professor David Keith has spoken publicly about climate change and inequality, adding after the latest study that, "the poorest people tend to suffer most from climate change because they’re the most vulnerable. Reducing extreme weather benefits the most vulnerable the most. The only reason I’m interested in this is because of that."

But he does not believe that anywhere near enough research has been done into the viability of the approach or its possible consequences, and he cautions that there is a need for "an adequate governance system in place".

There is no doubt that research in this field is exciting, but there are serious ethical and governance problems to be resolved before it can be considered a credible component of an emissions reduction strategy.