The world is heading towards a data explosion. Could DNA be the answer to our storage problems? Katrina Megget investigates

Estimates suggest we are generating more than 40 zettabytes of data each year, enough to fill more than 40bn one-terabyte hard drives. By 2025, this could be as much as 175 zettabytes. But we are creating data faster than we can store it. In short, we have an impending data storage problem.

‘Current electronic data storage methods are not sufficient to hold the predicted flood of data. So, how and where will all this information be stored, if we run out of storage space?’ says Bill Efcavitch, Co-founder and Chief Scientific Officer of San Diego-based DNA synthesis firm Molecular Assemblies. ‘We think DNA could be an important part of the solution. If we could simulate how nature stores genetic information in the genome – essentially through microscopic DNA packages that compact massive amounts of data into a stable, easily replicable material – we can revolutionise data storage.’

The idea of using DNA to store large volumes of digital data was first floated in the 1960s, but the concept has attracted broader scientific interest since seminal papers were published in 2012 and 2013. While still in its infancy, the field is picking up pace as the data storage problem becomes more apparent.

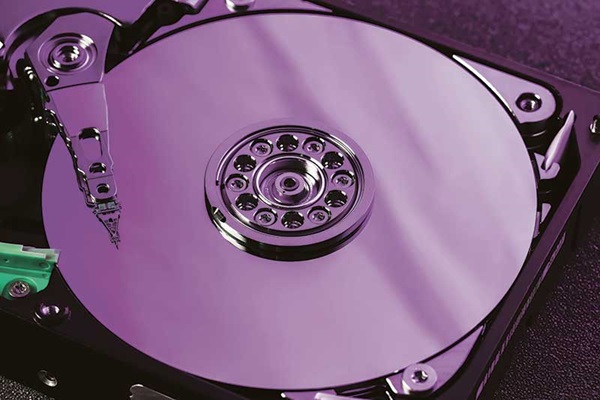

Digital information is currently stored in one of three ways. Magnetic storage, such as floppy disks and hard disk drives, uses a magnetic field to magnetise and demagnetise tiny sections of a spinning disk coated with magnetic material, representing the binary digital code of 0s and 1s.

Meanwhile, optical storage, such as CDs and DVDs, uses a laser to scan the surface of a spinning metal and plastic disk. The disk is divided into tracks made up of flat areas and pits that either reflect or scatter the laser light, representing the binary code. The third, and increasingly common, type is semiconductor or solid-state storage, such as random access memory (RAM) in computers and USB memory sticks. Here, data are stored electrically in silicon chips, with each binary digit represented by whether a transistor holds an electrical charge, switching it between on and off states.

However, these traditional forms of storage have shortcomings, chiefly that they aren’t built for the long term: they last no more than 50 years, if that. Magnetic storage, for instance, records data using a weak physical force, so the data are not particularly stable.

Of greater concern is the data explosion’s impact on silicon supply. Henry Lee, postdoctoral fellow at the Wyss Institute for Biologically Inspired Engineering at Harvard University, US, points to a 2016 paper (V. Zhirnov et al, Nature Materials, 2016, 15, 366) suggesting that the silicon needed to store the data we generate will soon exceed the amount of silicon mined for chips. Indeed, there may be no computer-grade silicon left by 2040.

Current forms of storage also take up large amounts of space and resources. All of Facebook’s data centres around the world, for instance, cover a total area of about 15m ft². Lee cites an open access journal article (A. Andrae and T. Edler, Challenges, 2015, 6, 117) which warns that, in a worst-case scenario, cloud storage data centres like these could require around 26% of the world’s electricity by 2030.

Nick Goldman, head of research and senior scientist at the European Molecular Biology Laboratory’s European Bioinformatics Institute in Cambridgeshire, UK, ran some numbers in 2019 on projected data growth and found that within 75 years data centres would cover an area equivalent to the Earth’s surface. ‘There is clearly an issue,’ he says. ‘If we can’t find technology to store this data, something will have to change – we might not be able to keep our cat photos.’

DNA potential

This is where deoxyribonucleic acid comes in: a compact, durable and easily replicable medium that has stored biological information in the form of genes for millions of years. Its advantages as a medium for digital data are many. For starters, DNA is incredibly dense, requiring up to 100 times less physical storage space by volume than current storage technologies. Theoretical estimates suggest 1g of DNA could store about 200 exabytes (200m terabytes) of data. Put another way, the amount of DNA in a human thumb could store as much information as more than a million digital thumb drives. Some say the whole accessible internet could fit in a shoebox. This small size also means DNA would require less energy to store.
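
The density figure can be sanity-checked with a back-of-envelope calculation. The sketch below is an illustration, not the method behind the quoted estimate: it assumes an average mass of about 330 g/mol per nucleotide of single-stranded DNA and an idealised 2 bits per base, and it ignores indexing, error correction and practical limits on synthesis and sequencing. Under those assumptions it gives an upper bound of roughly 456 exabytes per gram, the same order of magnitude as the 200 exabytes quoted, and confirms that 200 exabytes equals 200m terabytes.

```python
# Back-of-envelope upper bound on the data 1 g of single-stranded DNA could hold.
# Assumptions (not from the article): ~330 g/mol per nucleotide, 2 bits per base,
# and no overhead for indexing or error correction.
AVOGADRO = 6.02214076e23      # molecules per mole
NUCLEOTIDE_MASS = 330.0       # g/mol, approximate average for ssDNA
BITS_PER_BASE = 2             # four bases -> log2(4) = 2 bits

EXABYTE = 1e18                # bytes (decimal units)
TERABYTE = 1e12


def max_bytes_per_gram(mass_g: float = 1.0) -> float:
    """Return the idealised byte capacity of mass_g grams of DNA."""
    nucleotides = mass_g / NUCLEOTIDE_MASS * AVOGADRO
    return nucleotides * BITS_PER_BASE / 8


capacity = max_bytes_per_gram()
print(f"{capacity / EXABYTE:.0f} EB per gram")          # 456 EB per gram
print(f"{200 * EXABYTE / TERABYTE:.0e} TB in 200 EB")    # 2e+08 TB in 200 EB
```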

DNA is also incredibly stable, held together by strong chemical bonds. ‘In the wild, DNA can sometimes preserve information for thousands to hundreds of thousands of years,’ says Karin Strauss, principal research manager at Microsoft and affiliate professor at the Paul G. Allen School of Computer Science and Engineering at the University of Washington, US. ‘Scientists have been able to reproduce long-term storage of data in DNA in synthetic conditions, showing evidence of DNA being able to keep information for the equivalent of thousands of years.’

What’s more, DNA is easy to copy and read, allows random access and can be stored in various ways, from freezing to encasing it in different materials. It is also unlikely to become obsolete as a storage medium as long as there is interest in biology and genetics.

‘DNA storage offers a real possibility because the technology exists now,’ says Robert Grass, professor at the Institute for Chemical and Bioengineering at Switzerland’s ETH Zurich. ‘This is also really interesting because it combines two scientific disciplines – biology and computer science – where we are using biology to store digital data.’

A variety of data have now been stored in DNA and retrieved, showing that it can be done: all 154 of Shakespeare’s sonnets, the 1895 French film Arrival of a Train at La Ciotat, all 16GB of Wikipedia’s English-language text and part of Martin Luther King Jr’s ‘I Have a Dream’ speech. Most recently, Grass worked with San Francisco-based DNA synthesis firm Twist Bioscience to store the first episode of the Netflix biotech thriller Biohackers in DNA. The 46-minute episode amounted to approximately 70MB of data.

‘First, we convert the digital data of 0s and 1s into the DNA bases A (adenine), T (thymine), C (cytosine), and G (guanine). These molecules are then synthesised to a specific sequence based on the digital data and suspended in liquid. Then when reading the information back, we recover the DNA, sequence the ATCGs and the computer translates this back into 0s and 1s, which can then be interpreted digitally,’ explains Grass.

According to Twist’s Chief Executive, Emily Leproust, the binary code of 0s and 1s is converted into bases. One simplistic way to explain this, says Leproust, though it is not the encoding algorithm Twist uses, would be to map 00 to A, 01 to C, 10 to G and 11 to T. Twist splits the encoded data file into short segments of DNA about 200 to 300 bases long that can be synthesised and stored. ‘In addition to storing part of the data file, each short segment contains an index to indicate its place within the overall data file. [This] allows part of the file to be biologically recovered before sequencing, in other words random access, so only data of interest is sequenced.’
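
Leproust’s simplified mapping and the idea of indexed segments can be sketched in a few lines of Python. This is a minimal illustration, not Twist’s encoding: the 200-base payload, the 8-base index prefix and the function names (`bytes_to_dna`, `segment`, `reassemble`) are hypothetical choices for this example. Because each segment carries its own position, the pieces can be put back in order even after they have been mixed together in solution.

```python
# Illustrative only: Leproust's simplified 2-bit mapping (NOT Twist's real
# algorithm) plus a hypothetical index prefix on each segment.
BITS_TO_BASE = {"00": "A", "01": "C", "10": "G", "11": "T"}
BASE_TO_BITS = {base: bits for bits, base in BITS_TO_BASE.items()}


def bytes_to_dna(data: bytes) -> str:
    """Map every pair of bits to one base, so each byte becomes four bases."""
    bits = "".join(f"{byte:08b}" for byte in data)
    return "".join(BITS_TO_BASE[bits[i:i + 2]] for i in range(0, len(bits), 2))


def dna_to_bytes(seq: str) -> bytes:
    """Invert bytes_to_dna (len(seq) must be a multiple of 4)."""
    bits = "".join(BASE_TO_BITS[base] for base in seq)
    return bytes(int(bits[i:i + 8], 2) for i in range(0, len(bits), 8))


def segment(seq: str, payload_len: int = 200, index_bases: int = 8) -> list[str]:
    """Split seq into chunks, each prefixed with its chunk number written in bases.

    index_bases must be a multiple of 4 (four bases per index byte).
    """
    segments = []
    for n, start in enumerate(range(0, len(seq), payload_len)):
        index = bytes_to_dna(n.to_bytes(index_bases // 4, "big"))
        segments.append(index + seq[start:start + payload_len])
    return segments


def reassemble(segments: list[str], index_bases: int = 8) -> str:
    """Restore the original order from the index prefixes, then strip them."""
    def position(s: str) -> int:
        return int.from_bytes(dna_to_bytes(s[:index_bases]), "big")
    return "".join(s[index_bases:] for s in sorted(segments, key=position))


print(bytes_to_dna(b"Hi"))   # CAGACGGC
```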

But writing and reading DNA are error-prone processes. Leproust notes that error-correcting algorithms are therefore applied to ensure the data are recovered without errors. Grass adds that the idea is to include redundancies: ‘If you scratch a DVD, you can still read it because redundancies have been added. It’s the same concept.’
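
The simplest form of the redundancy Grass describes is to read the same segment several times and take a majority vote at each position. The sketch below shows only that idea; it is not the error-correcting code used by Twist or anyone else, and it assumes equal-length reads with substitution errors only (no inserted or missing bases), which real sequencing data do not guarantee.

```python
from collections import Counter


def consensus(reads: list[str]) -> str:
    """Return the most common base at each position across equal-length reads.

    Minimal sketch: assumes every read covers the same segment and that only
    substitution errors occur (no insertions or deletions).
    """
    if not reads or len({len(r) for r in reads}) != 1:
        raise ValueError("need one or more reads of equal length")
    return "".join(Counter(column).most_common(1)[0][0] for column in zip(*reads))


reads = ["CAGACGGC", "CAGTCGGC", "CTGACGGC"]   # two reads carry one error each
print(consensus(reads))                         # CAGACGGC
```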

Streamlining storage

In 2012, when Goldman stored an MP3 recording of part of Martin Luther King’s famous speech, he used a different method, because runs of a single repeated base are easily misread. He converted the binary code into a ternary code of 0s, 1s and 2s and then devised a scheme in which the base used for each digit depends on the previous base. So if the preceding base was a T and the next digit was a 2, it was encoded as a G, but if the preceding base was an A, the 2 was represented as a T. The code therefore never contained repeated bases such as AA or GGG, making the DNA easier to synthesise and to read correctly.
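
Goldman’s base-rotation idea can be sketched as follows. The rule `next = BASES[(index(prev) + 1 + digit) % 4]` is an assumption chosen because it reproduces both examples above and always picks one of the three bases that differ from the previous one. The plain base-3 conversion (six ternary digits per byte) and the starting context of "A" are also assumptions and need not match Goldman’s own binary-to-ternary step.

```python
# Sketch of a rotating ternary code in the spirit of Goldman's scheme.
# Assumptions: plain base-3 conversion of bytes, an assumed rotation rule that
# matches the article's two examples, and a starting context of "A".
BASES = "ACGT"


def bytes_to_trits(data: bytes) -> list[int]:
    """Plain base-3 conversion: six ternary digits per byte (3**6 = 729 >= 256)."""
    trits = []
    for byte in data:
        digits = []
        for _ in range(6):
            byte, remainder = divmod(byte, 3)
            digits.append(remainder)
        trits.extend(reversed(digits))     # most significant digit first
    return trits


def trits_to_bytes(trits: list[int]) -> bytes:
    """Invert bytes_to_trits."""
    out = []
    for i in range(0, len(trits), 6):
        value = 0
        for t in trits[i:i + 6]:
            value = value * 3 + t
        out.append(value)
    return bytes(out)


def encode(trits: list[int], prev: str = "A") -> str:
    """Pick one of the three bases that differ from the previous base."""
    seq = []
    for t in trits:
        prev = BASES[(BASES.index(prev) + 1 + t) % 4]
        seq.append(prev)
    return "".join(seq)


def decode(seq: str, prev: str = "A") -> list[int]:
    """Recover each digit from the step between consecutive bases."""
    trits = []
    for base in seq:
        trits.append((BASES.index(base) - BASES.index(prev) - 1) % 4)
        prev = base
    return trits


# The two cases from the text: after T, a 2 becomes G; after A, a 2 becomes T.
print(encode([2], prev="T"), encode([2], prev="A"))   # G T
```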

Each DNA segment was 117 bases long, with 17 of those bases dedicated to indexing the segment. Splitting the sequence several times in different, overlapping ways, so that each stretch of data appeared in more than one segment, was also intended to build in error correction. ‘Recoding 0s and 1s into bases is done in different ways by different labs and companies,’ he says. ‘There isn’t a best way to do it yet but there is now a lot of research into this space.’
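
The 117 bases minus 17 index bases leaves 100 data bases per segment, but the overlap Goldman used is not given here. The sketch below therefore assumes, hypothetically, 100-base data segments starting every 25 bases, which puts each interior base in four segments (bases near the ends appear in fewer). Each segment’s start position stands in for its index, and a majority vote across the overlapping copies lets the original sequence survive one badly damaged segment.

```python
from collections import Counter

# Hypothetical parameters: 100-base segments starting every 25 bases.


def overlapping_segments(seq: str, length: int = 100, step: int = 25) -> list[tuple[int, str]]:
    """Cut seq into overlapping windows; each start position serves as its index."""
    last = max(len(seq) - length, 0)
    starts = list(range(0, last + 1, step))
    if starts[-1] != last:
        starts.append(last)          # make sure the tail of seq is covered
    return [(s, seq[s:s + length]) for s in starts]


def coverage(seq_len: int, segments: list[tuple[int, str]]) -> list[int]:
    """Count how many segments contain each position."""
    counts = [0] * seq_len
    for start, seg in segments:
        for i in range(start, start + len(seg)):
            counts[i] += 1
    return counts


def reassemble(seq_len: int, segments: list[tuple[int, str]]) -> str:
    """Rebuild seq by majority vote over all copies of each position."""
    votes = [Counter() for _ in range(seq_len)]
    for start, seg in segments:
        for offset, base in enumerate(seg):
            votes[start + offset][base] += 1
    return "".join(v.most_common(1)[0][0] for v in votes)


seq = "ACGT" * 50                                         # 200 bases
segments = overlapping_segments(seq)
print(len(segments), max(coverage(len(seq), segments)))   # 5 4
```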

One of the main obstacles to widespread use is cost, which currently sits at about $1000/megabyte. At Harvard’s Wyss Institute, Lee is looking to cut the cost by using a different, faster method to synthesise the DNA. Instead of traditional organic synthesis, Lee is placing his bet on enzymes, and he hopes to bring the cost of DNA storage down one million-fold or more.

Lee’s team uses a template-independent DNA polymerase, which is electronically controlled, for de novo DNA synthesis without the need for a pre-existing template strand. Using a 0, 1, 2 system instead of binary code, the method allows bases to be repeated in a way that builds redundancy into the sequence.

‘Generally, in DNA synthesis there should be no room for error but with the application of DNA storage we care more about speed, cost and scale, which means we are prepared to sacrifice accuracy and precision for the sake of these. Our goal is that data are stored 100% correctly but that does not mean the physical polymer has to be 100% correct,’ Lee says. The data might be stored as ATCG, for instance, while the DNA polymer is synthesised as AATTCCGG. This tolerates synthesis and sequencing error rates of up to 30% while the stored data remain accurate. ‘It’s the same concept already seen in hard drives.’
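
One way to see why AATTCCGG can still carry ATCG is to discard run lengths and read information only from the changes between bases. The sketch below assumes that each change encodes a 0, 1 or 2 using a base-rotation rule like the one in the Goldman-style example; the article does not give Lee’s exact scheme, so the rule and function names are illustrative. It tolerates runs of the wrong length, but not a run that is missing altogether or a wrong base.

```python
from itertools import groupby

BASES = "ACGT"


def collapse_runs(polymer: str) -> str:
    """Drop run lengths: AATTCCGG -> ATCG."""
    return "".join(base for base, _ in groupby(polymer))


def transitions_to_trits(seq: str) -> list[int]:
    """Read a 0, 1 or 2 from each change between consecutive (distinct) bases.

    Assumed rule: the next base is BASES[(index(prev) + 1 + digit) % 4].
    """
    return [(BASES.index(b) - BASES.index(a) - 1) % 4 for a, b in zip(seq, seq[1:])]


for polymer in ("AATTCCGG", "ATTTCGGG"):   # same data, different run lengths
    print(polymer, collapse_runs(polymer), transitions_to_trits(collapse_runs(polymer)))
# AATTCCGG ATCG [2, 1, 0]
# ATTTCGGG ATCG [2, 1, 0]
```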

Molecular Assemblies is also using enzymatic DNA synthesis to create a cost-effective DNA storage solution. Efcavitch says the firm’s aqueous-based enzymatic synthesis approach has the potential to produce DNA data strands 10 to 50 times longer than the 150-nucleotide strands made by the more common phosphoramidite chemical synthesis method. ‘The older chemical synthesis method requires extensive post-synthesis processing and hence is time consuming and expensive and is not optimal for data storage applications.’

The firm’s approach converts binary code into a two-nucleotide code, using A and T bases for 0 and 1 bits in odd positions and G and C bases for 0 and 1 bits in even positions. Each bit is encoded by a short homopolymer, a run of a single base, rather than by a single nucleotide, which Efcavitch says creates a different and faster process for writing DNA.
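
A sketch of how alternating base pairs could make homopolymer runs safe to use: because bits in neighbouring positions are drawn from different pairs ({A, T} versus {G, C}), two adjacent bits never produce the same base, so each run can be read as exactly one bit however long it is. Which base stands for 0 and which for 1, counting positions from 1, and the function names `write` and `read` are assumptions for illustration, not Molecular Assemblies’ published format.

```python
from itertools import groupby

# Assumed assignment: odd positions use A (0) / T (1), even positions G (0) / C (1).
ODD = {"0": "A", "1": "T"}    # bits at positions 1, 3, 5, ...
EVEN = {"0": "G", "1": "C"}   # bits at positions 2, 4, 6, ...
DECODE = {"A": "0", "T": "1", "G": "0", "C": "1"}


def write(bits: str, run_lengths: list[int]) -> str:
    """Write each bit as a homopolymer; run lengths (>= 1) need not be controlled."""
    if len(bits) != len(run_lengths):
        raise ValueError("need one run length per bit")
    return "".join((ODD if pos % 2 else EVEN)[bit] * n
                   for pos, (bit, n) in enumerate(zip(bits, run_lengths), start=1))


def read(polymer: str) -> str:
    """Adjacent bits never share a base, so each run of one base is one bit."""
    return "".join(DECODE[base] for base, _ in groupby(polymer))


polymer = write("0110", [3, 1, 2, 4])
print(polymer, read(polymer))   # AAACTTGGGG 0110
```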

Meanwhile, Microsoft, working with the University of Washington, aims to cut costs and streamline DNA storage by building a desktop-sized device that automates the entire workflow and removes the human factor. ‘Since our target is to place this technology in a datacentre, it seems impractical to rely on people to move the DNA and fluids needed to manipulate it,’ Microsoft’s Strauss says. ‘Instead we need to automate the entire process. Humans will still be needed to maintain and improve the system, but the tedious tasks are left to an autonomous system.’

The proof-of-concept device contains software to convert digital data into bases, a system that adds the chemicals needed to synthesise the DNA, a special vessel to store the DNA, and microfluidic pumps that deliver the DNA to a sequencer, where it is read back and converted into binary code. The team successfully stored and retrieved the word ‘Hello’, just five bytes of data, in a process that took 21 hours end to end. ‘DNA storage is still in the research stage. While we are encouraged by our findings, it is too early to pinpoint a deployment date,’ says Strauss.

New horizons

But DNA storage is on the horizon. ‘The exponential increase in the generation of data will force the adoption of DNA-based data storage in the not too distant future,’ Efcavitch says. ‘If done successfully, the total cost of ownership for DNA data storage could eventually become cheaper than current electronic methods of data storage.’ Twist Bioscience, for one, is aiming to reduce the cost to $100/terabyte within five years. Lee, however, says the conversation will eventually shift from the cost per byte sequenced to the cost of storing data for several generations.
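
The cost figures in this article use different units, so a quick conversion helps to compare them. The inputs below ($1000/megabyte today, Lee’s million-fold reduction, Twist’s $100/terabyte target and the roughly 70MB Biohackers episode) come from the text. The sketch assumes decimal units (1 terabyte = 1,000,000 megabytes) and simply multiplies the quoted rate by the file size, which is not a reported cost for that project.

```python
# Unit arithmetic on the article's cost figures (decimal units assumed).
MB_PER_TB = 1_000_000

current_per_mb = 1000.0                    # about $1000/megabyte today
current_per_tb = current_per_mb * MB_PER_TB
lee_goal_per_tb = current_per_tb / 1e6     # a million-fold reduction
twist_target_per_tb = 100.0                # Twist's five-year target
episode_cost = 70 * current_per_mb         # ~70MB episode at today's rate

print(f"today: ${current_per_tb:,.0f} per TB")                      # today: $1,000,000,000 per TB
print(f"after a million-fold cut: ${lee_goal_per_tb:,.0f} per TB")  # after a million-fold cut: $1,000 per TB
print(f"Twist target: {current_per_tb / twist_target_per_tb:,.0f}-fold cheaper")  # Twist target: 10,000,000-fold cheaper
print(f"70MB at today's rate: ${episode_cost:,.0f}")                # 70MB at today's rate: $70,000
```

On these numbers, Twist’s target implies a cut roughly ten times deeper than the million-fold reduction Lee is aiming for.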

Indeed, most experts believe DNA storage will find its widest use in long-term archiving of large volumes of data, such as clinical trial or historical information. ‘The future will be people and consumers deciding what data they want to keep and DNA might be a great solution to archive this information. That’s where we think the future is,’ says Lee. Grass believes this could still be 10 years away. But he adds that DNA storage could find other applications, such as being embedded into objects, in as little as five years. ‘Whether this will compete with other forms of storage on the market, I don’t know but it will certainly have its place in many applications,’ he says.

Twist’s Leproust says the physical components of DNA as a storage medium – DNA writing, storage and reading – have been widely demonstrated. ‘The ability to store digital data in DNA seems futuristic, but the future is now,’ she says. ‘Now it is a race to scale the technologies and, in the process, reduce the cost in order to make a commercially viable product.’